GPT Image 2 Prompt Guide

Structured prompting techniques that unlock GPT Image 2's reasoning architecture — from text-heavy designs to multi-element compositions.

GPT Image 2 is built on a reasoning architecture that reads your entire prompt before rendering. This changes how you should write prompts — detail and structure are rewarded, not punished. The model plans the composition before it touches a pixel, which means the way you organize information in your prompt directly affects the output.

This guide covers the prompting techniques that consistently produce better results with GPT Image 2 — from text-heavy product shots to multi-subject editorial scenes.

How GPT Image 2 reads your prompt

Many earlier image models treat a prompt as a loosely weighted set of tokens: earlier words tend to carry more weight, and adherence degrades as instructions become more complex. GPT Image 2 works differently. It parses the entire prompt, identifies relationships between elements, and plans the spatial layout before generation begins.

This means a 200-word prompt with precise spatial instructions will produce a more faithful result than a 20-word prompt with vague descriptors. The model does not penalize length — it rewards intentionality.

Prompt structure that works

The most effective GPT Image 2 prompts follow a consistent structure: subject first, then environment, then style, then technical specifications.

- Subject — who or what is in the frame, described with specifics (not "a woman" but "a woman in her 30s wearing a linen blazer, looking camera-left")

- Environment — where the subject exists, with lighting and spatial cues ("standing at a marble kitchen counter, morning light from a window on the left")

- Style — the visual language ("editorial photography, shallow depth of field, warm neutral tones")

- Technical — aspect ratio, text placement, and any typographic elements

This hierarchy matters because GPT Image 2 builds the scene in roughly this order. Front-loading the subject ensures it gets the most compositional attention.
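As a rough illustration of the ordering above, the sketch below assembles a prompt from four labeled parts. The `PromptParts` class and `build_prompt` function are hypothetical helpers written for this guide, not part of any GPT Image 2 tooling, and the aspect ratio in the example is an assumed value.

```python
from dataclasses import dataclass


@dataclass
class PromptParts:
    """Hypothetical container for the four sections described above."""
    subject: str
    environment: str
    style: str
    technical: str


def build_prompt(parts: PromptParts) -> str:
    """Join the sections in the recommended order: subject, environment, style, technical."""
    ordered = [parts.subject, parts.environment, parts.style, parts.technical]
    # Trim whitespace and trailing periods, and skip any section left empty.
    cleaned = [s.strip().rstrip(".") for s in ordered if s.strip()]
    return ". ".join(cleaned) + "." if cleaned else ""


parts = PromptParts(
    subject="A woman in her 30s wearing a linen blazer, looking camera-left",
    environment="Standing at a marble kitchen counter, morning light from a window on the left",
    style="Editorial photography, shallow depth of field, warm neutral tones",
    technical="4:5 aspect ratio, no text in the frame",  # assumed example values
)
prompt = build_prompt(parts)
print(prompt)
```

Keeping the parts separate makes it easy to swap the style or technical section while the subject stays at the front of the prompt.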

Text rendering prompts

Text in images is where GPT Image 2 most clearly separates itself from other models. To get the best results on PonPon's image studio, follow these rules:

- Spell out every word exactly. The model renders what you write — typos in your prompt become typos in the image.

- Specify font style. Use natural descriptions: "bold sans-serif," "thin serif italic," "handwritten script," "monospaced." GPT Image 2 interprets these as typographic instructions.

- Describe placement explicitly. "Centered at the top," "bottom-right corner in small text," "along the left edge, rotated 90 degrees." Vague placement produces vague results.

- Separate text elements. If your image has a headline and a subheading, describe each independently with its own font, size, and position.

For multilingual text, specify the language explicitly: "Chinese characters reading '欢迎光临' in bold sans-serif across the top." GPT Image 2 handles CJK, Hindi, Bengali, and other non-Latin scripts at the same accuracy as English.
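One way to keep text elements separate is to describe each one as its own record and turn it into a sentence. This is a minimal sketch; `TextElement` and `describe_text` are hypothetical names, and the subheading wording is an invented example.

```python
from dataclasses import dataclass


@dataclass
class TextElement:
    """Hypothetical record for one piece of text in the image."""
    role: str           # e.g. "headline" or "subheading"
    text: str           # exact wording, copied verbatim into the prompt
    font: str           # natural-language font description
    placement: str      # explicit position in the frame
    language: str = ""  # optional, for non-English text


def describe_text(el: TextElement) -> str:
    """Render one text element as a self-contained prompt sentence."""
    lang = f"{el.language} " if el.language else ""
    return f'{el.role.capitalize()}: {lang}text reading "{el.text}" in {el.font}, {el.placement}.'


elements = [
    TextElement("headline", "欢迎光临", "bold sans-serif", "centered at the top", language="Chinese"),
    TextElement("subheading", "Open daily from 9 to 6", "thin serif italic", "bottom-right corner in small text"),
]
text_lines = [describe_text(el) for el in elements]
print(" ".join(text_lines))
```

Because the wording is copied verbatim, any typo in `text` ends up in the prompt, so it is worth proofreading the records before generating.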

Multi-element scene prompts

Complex scenes with multiple subjects are where most image models break down. GPT Image 2 handles them if you give it clear instructions. Compare outputs in the visual workspace to see how it resolves multi-element prompts versus other models.

The key is explicit spatial relationships:

- Use position language. "Left side," "foreground," "behind the table," "overlapping the right edge." Ambiguous placement produces ambiguous results.

- Number everything. "Three ceramic cups in a row" is better than "several cups." "Six people seated around a circular table" is better than "a group at dinner."

- Specify relative sizes. "A small potted plant on the counter, roughly half the height of the coffee machine beside it."

- Describe lighting direction. "Key light from upper-left, soft fill from the right, no harsh shadows." GPT Image 2 uses lighting cues to establish depth relationships.
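The rules above can be sketched as a small helper that insists on exact counts and explicit positions. `describe_scene` and `VAGUE_COUNTS` are hypothetical names, and the list of vague quantifiers is an assumption rather than an exhaustive set.

```python
# Quantifiers treated as too vague; an illustrative list, not a complete one.
VAGUE_COUNTS = {"several", "some", "many", "a few", "a group of"}


def describe_scene(elements: list[tuple[str, str, str]], lighting: str) -> str:
    """Build a scene description from (count, what, where) tuples plus a lighting note."""
    sentences = []
    for count, what, where in elements:
        if count.lower() in VAGUE_COUNTS:
            raise ValueError(f"Use an exact count instead of '{count}'")
        sentence = f"{count} {what} {where}"
        sentences.append(sentence[0].upper() + sentence[1:])
    sentences.append(f"Lighting: {lighting}")
    return ". ".join(sentences) + "."


scene = describe_scene(
    [
        ("three", "ceramic cups in a row", "on the left side of the counter"),
        ("one", "small potted plant", "on the counter, roughly half the height of the coffee machine beside it"),
    ],
    lighting="key light from upper-left, soft fill from the right, no harsh shadows",
)
print(scene)
```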

Reference-image editing prompts

When uploading reference images for editing, your prompt should describe the change, not the whole scene. GPT Image 2 already sees the reference — it needs to know what to modify.

- Be surgical. "Replace the red car in the driveway with a silver bicycle" instead of re-describing the entire scene.

- Preserve explicitly. "Keep the background, lighting, and all other objects unchanged" reinforces the model's subject fidelity.

- Layer edits. For complex changes, do them in sequence — swap the product first, then adjust the label text, then tweak the lighting. GPT Image 2's subject fidelity holds across rounds.
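A layered edit can be written as a list of small prompts, each naming one change and restating what to keep. The sketch below only builds the prompt strings. `edit_prompt` and `join_list` are hypothetical helpers, and the label wording is an invented example.

```python
def join_list(items: list[str]) -> str:
    """Join items into readable English: 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 2:
        return " and ".join(items)
    return ", ".join(items[:-1]) + ", and " + items[-1]


def edit_prompt(change: str, preserve: list[str]) -> str:
    """Describe a single change, then state explicitly what must stay the same."""
    change = change.strip().rstrip(".")
    if not preserve:
        return change + "."
    return f"{change}. Keep {join_list(preserve)} unchanged."


preserve = ["the background", "lighting", "all other objects"]
changes = [
    "Replace the red car in the driveway with a silver bicycle",
    "Change the label text on the mailbox to 'No. 12'",
]
# One prompt per round, applied in sequence against the latest result.
rounds = [edit_prompt(change, preserve) for change in changes]
for r in rounds:
    print(r)
```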

Style control prompts

GPT Image 2 handles photorealistic and illustrative styles equally well. For specific artistic styles like oil painting, watercolor, or ukiyo-e, a model with a wider artistic style range may produce more faithful results, but GPT Image 2 covers mainstream styles thoroughly.

Effective style prompts:

- Photography styles — "editorial portrait photography," "product photography on white seamless," "street photography, 35mm film grain"

- Illustration styles — "flat vector illustration," "isometric technical drawing," "children's book watercolor"

- Hybrid styles — "photorealistic rendering with illustrated UI elements overlaid," "editorial layout with photography and typographic design"

Avoid vague style terms like "beautiful," "professional," or "high quality." These add noise without direction. Specific references produce specific results.

Prompt templates

These templates work as starting points. Modify the bracketed sections for your use case:

- Product shot: "Product photography of [item] on [surface]. [Brand name] logo visible on the label. Studio lighting, [color] background, sharp focus, commercial quality."

- Social media card: "[Platform] post format, [aspect ratio]. Headline reading '[exact text]' in bold sans-serif at the top. [Subject description] as the main visual. Brand color palette: [hex or description]."

- UI mockup: "Mobile app screen showing [feature description]. [Exact placeholder text] in the header. Clean interface design, [light/dark] mode, [design system reference]."

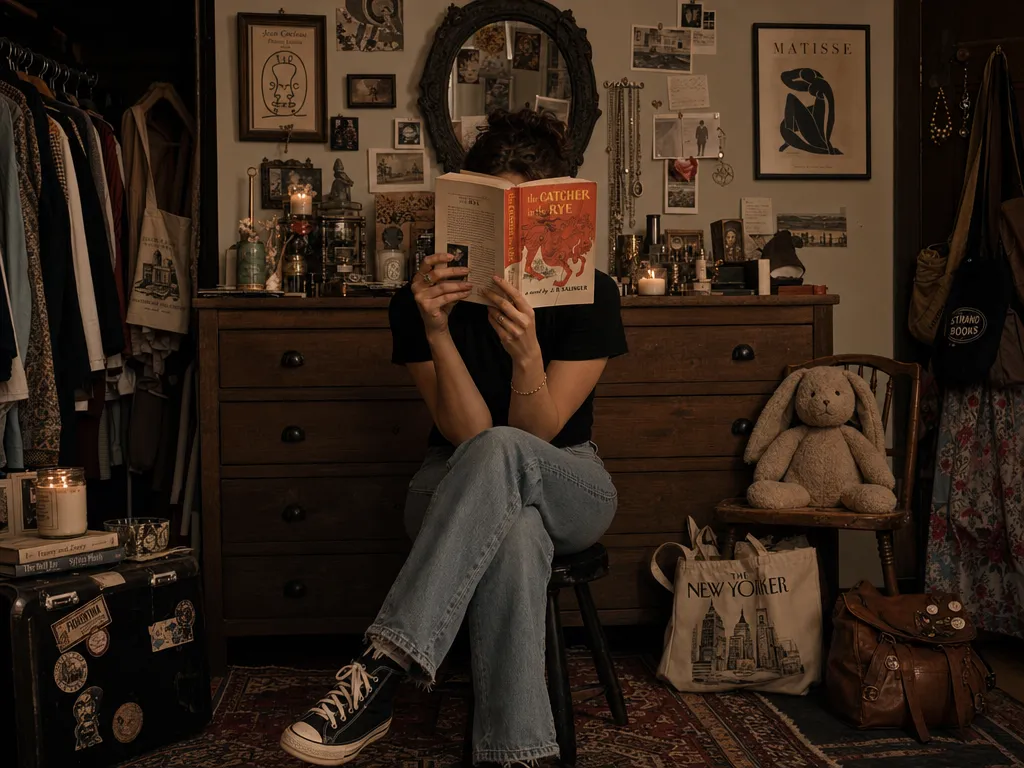

- Editorial illustration: "[Subject] in [setting]. [Mood/lighting]. Composition follows rule of thirds with the subject at [position]. [Art direction style], for [publication type]."
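If you reuse these templates often, the bracketed sections can be filled in programmatically. The following sketch assumes the bracket syntax shown above. `fill_template` is a hypothetical helper, and the product and brand values are invented for the example.

```python
import re

PRODUCT_SHOT = (
    "Product photography of [item] on [surface]. [Brand name] logo visible on "
    "the label. Studio lighting, [color] background, sharp focus, commercial quality."
)


def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace every [placeholder] with its value; fail loudly if one is missing."""
    def replace(match: re.Match) -> str:
        key = match.group(1)
        if key not in values:
            raise KeyError(f"No value supplied for [{key}]")
        return values[key]

    return re.sub(r"\[([^\]]+)\]", replace, template)


product_prompt = fill_template(
    PRODUCT_SHOT,
    {
        "item": "a glass bottle of cold brew",
        "surface": "a slate slab",
        "Brand name": "Northfield",  # invented brand, for illustration only
        "color": "pale grey",
    },
)
print(product_prompt)
```

Raising on a missing value prevents a literal "[color]" from reaching the model as if it were part of the prompt.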

Common prompting mistakes

- Keyword stacking. "Beautiful amazing stunning gorgeous professional" teaches the model nothing. One specific style direction outperforms five adjectives.

- Conflicting instructions. "Minimalist design with lots of intricate details" forces the model to choose. Pick a direction.

- Ignoring aspect ratio. A landscape scene crammed into a portrait aspect ratio wastes compositional potential. Match the ratio to the content.

- Underspecifying text. "Add some text" is a recipe for garbled characters. Spell out every word, every font choice, every position.
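Two of these mistakes, keyword stacking and underspecified text, are easy to check for before you submit a prompt. The sketch below is a rough heuristic, not a validator used by GPT Image 2. `lint_prompt`, the word list, and the regular expressions are all assumptions made for illustration.

```python
import re

# Illustrative list of vague terms, taken from the examples in this guide.
VAGUE_TERMS = {"beautiful", "amazing", "stunning", "gorgeous", "professional", "high quality"}


def lint_prompt(prompt: str) -> list[str]:
    """Return human-readable warnings for a prompt (empty list if none found)."""
    warnings = []
    lowered = prompt.lower()

    # Keyword stacking: flag vague adjectives that give no direction.
    found = sorted(t for t in VAGUE_TERMS if re.search(rf"\b{re.escape(t)}\b", lowered))
    if found:
        warnings.append("Vague style terms: " + ", ".join(found))

    # Underspecified text: text is requested but no exact wording is quoted.
    mentions_text = re.search(r"\b(text|headline|caption|label)\b", lowered)
    has_quoted = re.search(r"['\"][^'\"]+['\"]", prompt)
    if mentions_text and not has_quoted:
        warnings.append("Text is mentioned but no exact wording is quoted")

    return warnings


bad = lint_prompt("Beautiful amazing stunning poster, add some text")
good = lint_prompt("Flat vector illustration. Headline reading 'Open Daily' in bold sans-serif at the top.")
print(bad)
print(good)
```

A check like this cannot detect conflicting instructions or a mismatched aspect ratio; those still need a human read of the prompt.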

Iteration strategy

GPT Image 2's subject fidelity means you can iterate without starting over. The most efficient workflow is:

1. Generate a first pass with a detailed prompt

2. Upload the result as a reference image

3. Describe specific changes: "move the headline 20% higher," "make the background warmer," "replace the placeholder text with '[exact new text]'"

4. Repeat until the image matches the brief

For rapid iteration where speed matters more than maximum fidelity, a speed-optimized model may be a better place to brainstorm before switching to GPT Image 2 for the final version.

The reasoning architecture means each iteration refines rather than rerolls. Your fifth generation builds on the first four instead of starting from scratch.
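The iteration workflow can be sketched as a loop in which each round receives the previous result as its reference. The `generate` callable below is a stand-in with an assumed signature, not a real GPT Image 2 API; in practice you would wrap whatever upload-and-generate step your tool provides.

```python
from typing import Callable, Optional

# Assumed signature: (prompt, reference image or None) -> new image bytes.
GenerateFn = Callable[[str, Optional[bytes]], bytes]


def iterate(generate: GenerateFn, first_prompt: str, changes: list[str]) -> list[bytes]:
    """Run a detailed first pass, then apply each change to the latest result."""
    images = [generate(first_prompt, None)]
    for change in changes:
        # The previous output becomes the reference for the next edit.
        images.append(generate(change, images[-1]))
    return images


# Fake generator for demonstration: records calls instead of producing images.
calls: list[tuple[str, bool]] = []


def fake_generate(prompt: str, reference: Optional[bytes]) -> bytes:
    calls.append((prompt, reference is not None))
    return f"image-{len(calls)}".encode()


results = iterate(
    fake_generate,
    "Detailed first-pass prompt",
    ["Move the headline 20% higher", "Make the background warmer"],
)
print(calls)
```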